I Spent Weeks Blaming Claude Code. The Problem Was My Pipeline. | Igor Gridel

For weeks I tried to design a landing page for Scopefull using only AI. I do not enjoy designing from scratch. I know what I like when I see it, but opening Figma and building something from nothing is a different skill than spotting when something is off, and I do not have the first one. Handing it to a coding agent was the obvious move.

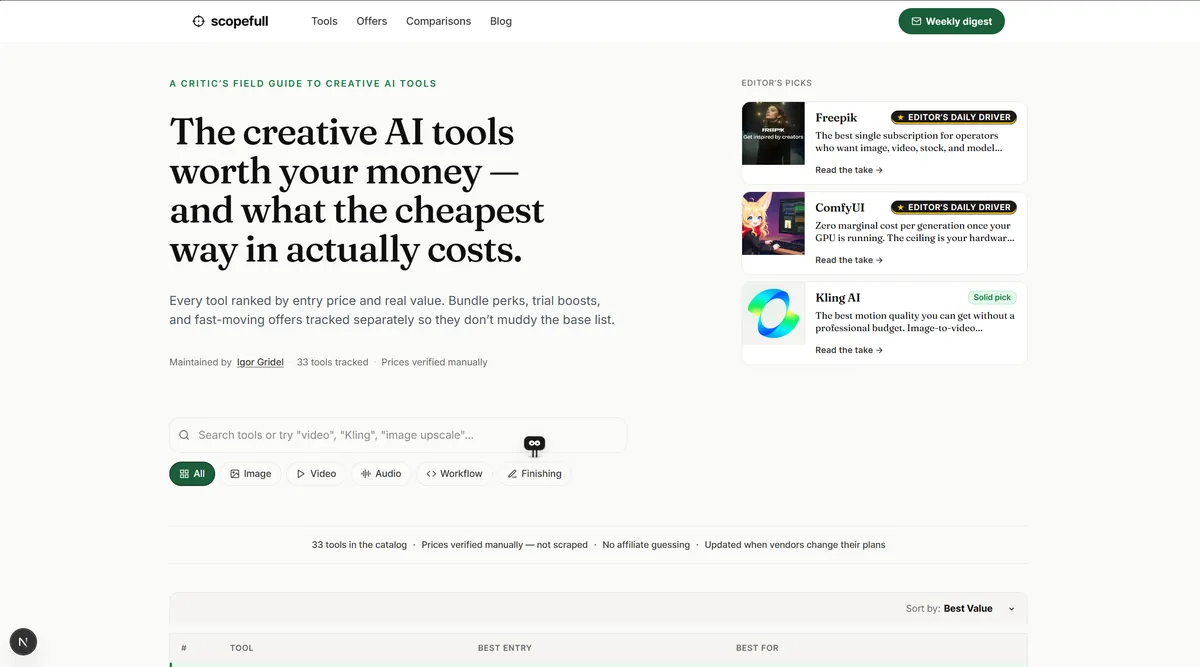

The first attempt came back from Claude Code. A clean directory layout with the headline "The creative AI tools worth your money and what the cheapest way in actually costs." There was an Editor's Picks sidebar on the right with three cards labelled Freepik, ComfyUI, Kling AI. Category tabs underneath. A sort-by-best-value dropdown. Thirty-three tools in a table below the fold.

It was not bad. It was also exactly what a thousand other AI tool directories already look like. The H1 hedged with "what the cheapest way in actually costs," which is a sentence nobody has ever typed into a search bar. It was a competent directory layout and a dead landing page.

I moved to Codex. Similar result. Then Google AI Studio, same pattern. I tried Claude Code with Paper Canvas MCP, which is supposed to be the more design-focused one, and got a version that looked different in the specific way a tool trying hard to look different always looks different.

At that point I had four agents, four tabs open, and zero landing pages that answered the question my users were actually arriving with. My first instinct was to blame the models. Design must be the thing these agents cannot do yet. I should wait for the next release.

That was the wrong story.

## The actual problem

The coding agents were not bad at design. I was asking them to do four jobs at once.

Every one of those prompts was really asking a single tool to understand the product, produce a spec, pick a visual direction, and write the code, all in one pass. Models do not get sharper when you stack jobs onto them. They average. That is why every landing page came back looking like the statistical mean of every landing page the model had seen. It was not a design. It was a midpoint.

The fix was to stop treating "build the landing page" as one request. It is three stages, and each stage wants a different tool.

## Stage one. Specs in Kiro.

I opened Kiro, described Scopefull, and let it generate the three documents it is good at: `requirements.md`, `design.md`, `tasks.md`. What came back was the best spec I have ever had for a personal project. Structured, specific, written like a product manager actually thought about the thing before the keyboard came out.

Then I tried to let Kiro do the coding too.

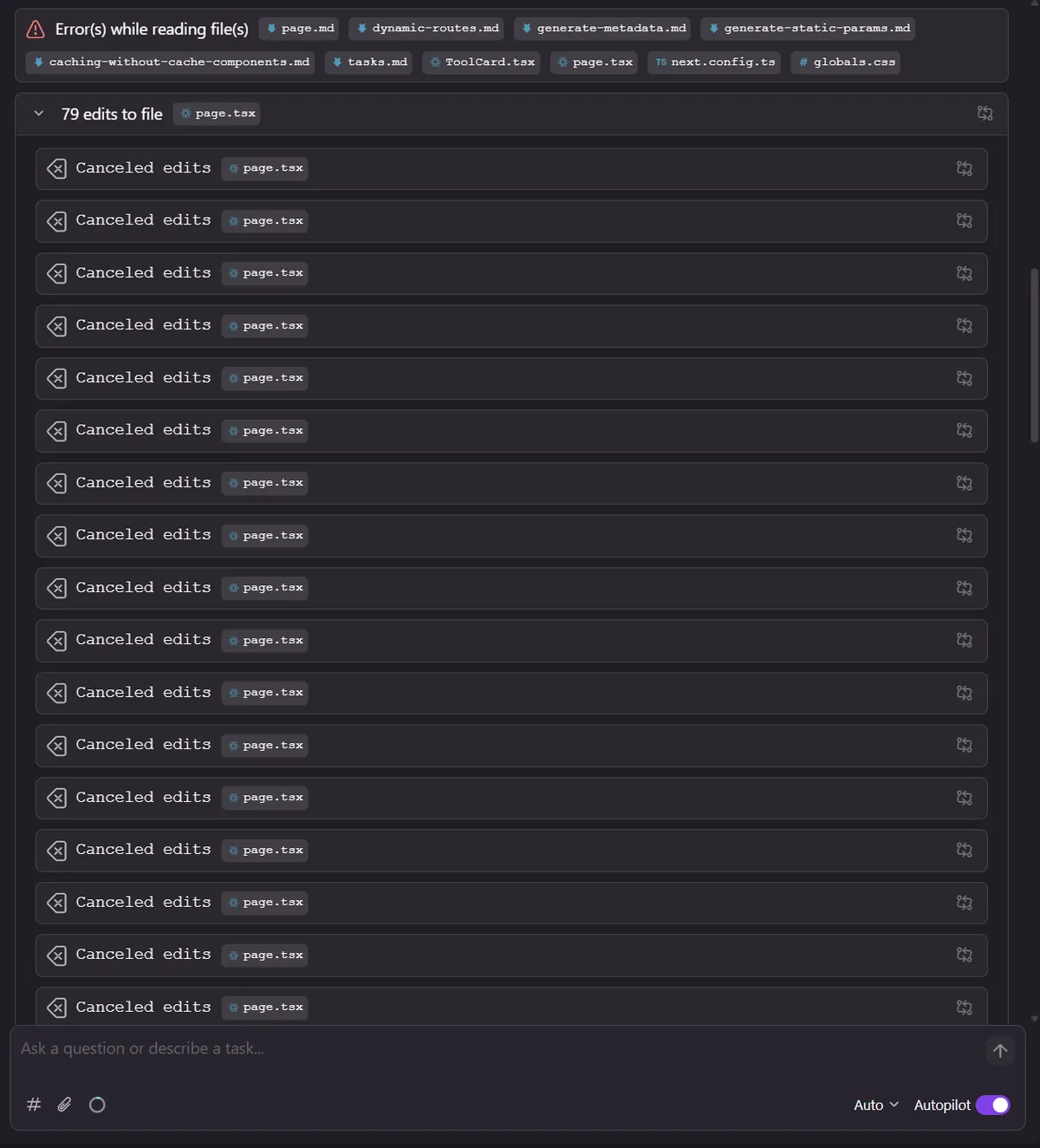

What you are looking at is Kiro trying to edit one file, `page.tsx`, seventy-nine times in a row. Every attempt cancelled. "Error(s) while reading file(s)" sits across the top with ten filenames it never actually read. I have no idea what it was trying to do. It did not finish any of it.

I will keep using Kiro for docs and never touch its coding side again. That is a tool used for what it is actually good at, not what the homepage promises.

## Stage two. Mockups before code.

I took the Kiro docs into Claude Code. Before a single line of code, I asked Claude for a few design directions described in prose. Not components, not code, just different visual takes on the site.

For each direction I used NanoBanana Pro to generate an actual image of what the landing page would look like. Real hero layout, real spacing, real type treatment. I picked the one that felt right and only then moved to code.

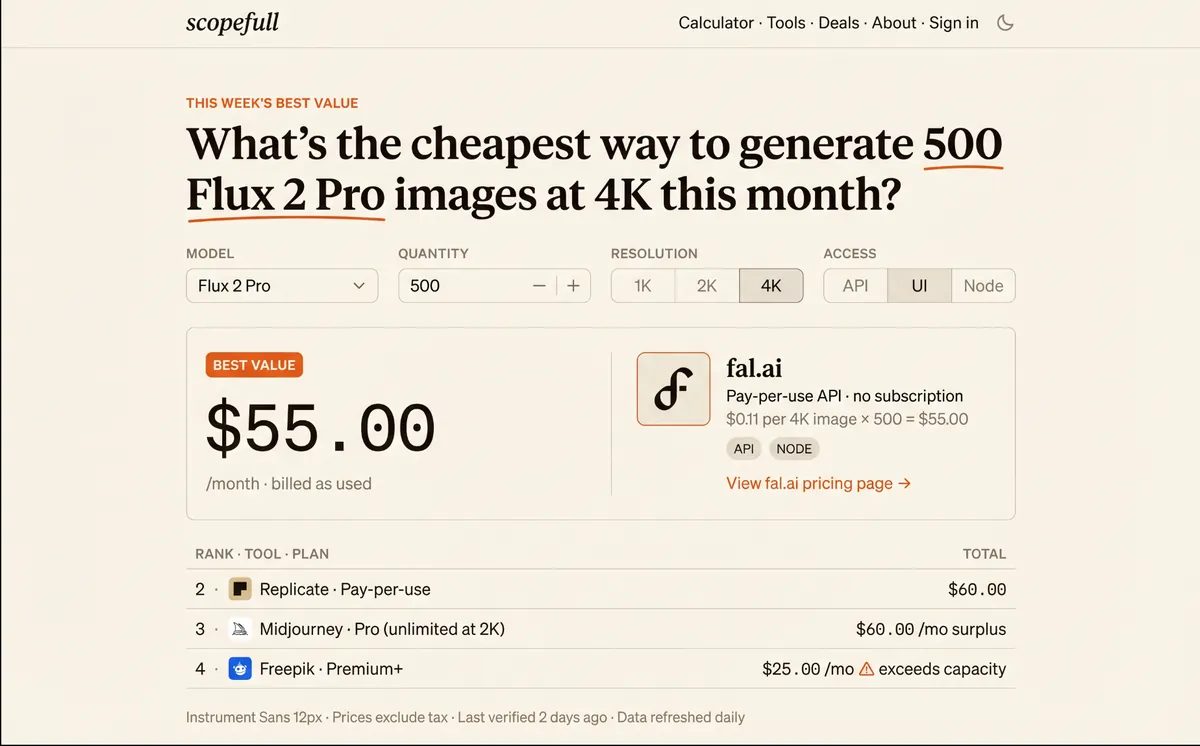

The mockup did one thing no directory design can do. It asked the question the visitor was already asking in their head. "What's the cheapest way to generate 500 Flux 2 Pro images at 4K this month?" Form underneath: model, quantity, resolution, access type. Giant answer in the orange BEST VALUE card. $55.00 a month, fal.ai, pay-per-use, $0.11 per 4K image times 500. Ranked alternatives below it.

Still not ideal, but that's a good starting point.
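The arithmetic behind that BEST VALUE card is simple enough to sketch. This is a minimal illustration, not Scopefull's actual code: the `PayPerUseOffer` shape, the function names, and the `example-reseller` row are all made up for the example. Only the fal.ai figure of $0.11 per 4K image at 500 images comes from the mockup.

```typescript
// Hypothetical shape for one pay-per-use offer; field names are illustrative.
interface PayPerUseOffer {
  provider: string;
  pricePerImageUsd: number;
}

// Monthly cost of a pay-per-use offer is unit price times monthly volume.
function monthlyCostUsd(offer: PayPerUseOffer, imagesPerMonth: number): number {
  return offer.pricePerImageUsd * imagesPerMonth;
}

// Rank offers from cheapest to most expensive for a given monthly volume.
function rankByMonthlyCost(offers: PayPerUseOffer[], imagesPerMonth: number) {
  return offers
    .map((offer) => ({
      provider: offer.provider,
      monthlyUsd: monthlyCostUsd(offer, imagesPerMonth),
    }))
    .sort((a, b) => a.monthlyUsd - b.monthlyUsd);
}

const offers: PayPerUseOffer[] = [
  { provider: "fal.ai", pricePerImageUsd: 0.11 }, // figure shown in the mockup
  { provider: "example-reseller", pricePerImageUsd: 0.15 }, // made-up comparison row
];

const ranked = rankByMonthlyCost(offers, 500);
console.log(ranked[0].provider, ranked[0].monthlyUsd.toFixed(2));
```

The first entry in `ranked` is what would land in the big card, and the rest become the ranked alternatives below it.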

Before I was doing this, I had been asking one tool to both invent the design and implement it, which meant the design always came out as the statistical mean. Generating the mockup as an image first forces the decision to happen in a medium where I can actually see it and react. When I finally go to Claude Code, I am not deciding and implementing at the same time. I am implementing one specific direction I already picked.

## Stage three. One feature at a time.

The boring stage that matters most. Instead of asking Claude for the entire landing page in one shot, I picked one feature, built it, viewed it in the browser, fixed what was off, and committed. Then I moved to the next one.

Every time I had tried the one-shot approach, the agent got seventy percent of it right and thirty percent slightly wrong in ways that compounded. By the end of the session I had a landing page I then had to argue with for two hours to unwind. One feature at a time removes the compounding. Each piece stands on its own, each piece gets attention, and if something is off I fix it now, while I still remember why it was off.

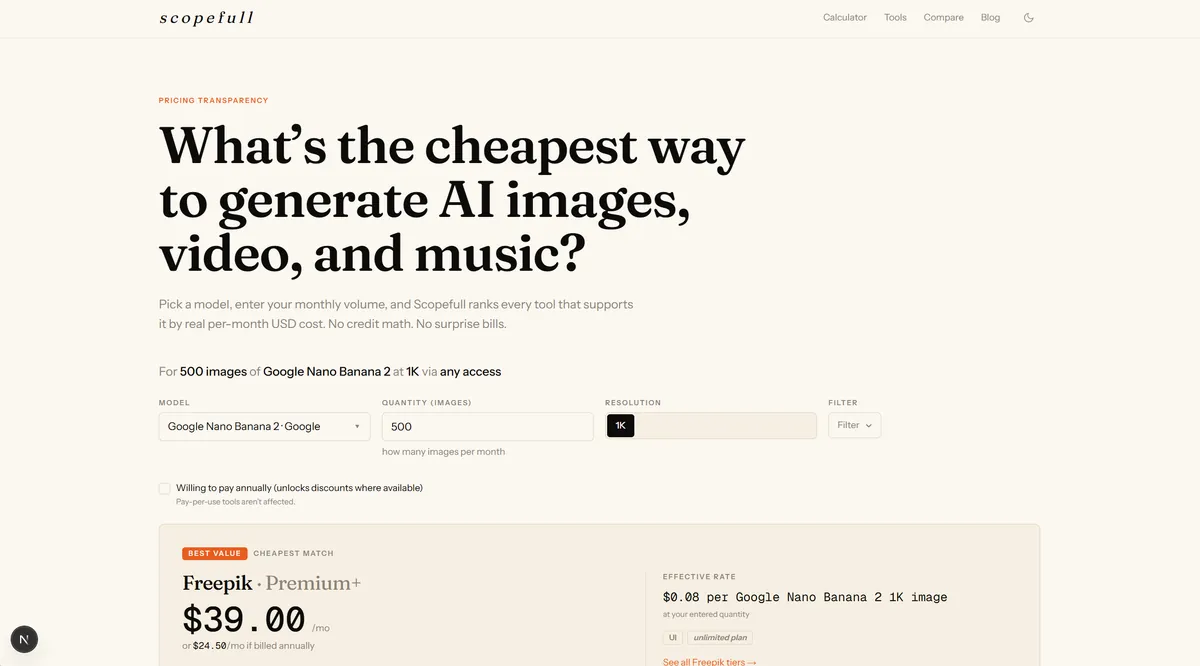

The latest version, not yet final, keeps the mockup's question-first spine and swaps in a real query that fits the MVP. "What's the cheapest way to generate AI images, video, and music?" Pick a model. Enter your monthly volume. See the cheapest match, including the effective per-image rate and whether the "unlimited" plan actually holds at your quantity. The first query example baked in is 500 images of Google Nano Banana 2, and the answer is Freepik Premium+ at $39 a month, or $24.58 if billed annually, at $0.08 per image.
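For a flat subscription, the effective per-image rate is the monthly price divided by how many images you actually make. Here is a rough sketch of that check, assuming a hypothetical `SubscriptionPlan` shape where `monthlyCap` models the point at which an "unlimited" plan stops holding. The Freepik Premium+ price is the one quoted above; the capped plan and its numbers are invented purely for illustration.

```typescript
// Hypothetical plan shape; none of these field names come from Scopefull itself.
interface SubscriptionPlan {
  name: string;
  monthlyPriceUsd: number;
  // Images per month before the plan throttles; null means no known cap (assumption).
  monthlyCap: number | null;
}

// Effective per-image rate at a given volume, or null if the plan
// cannot cover that volume (or the volume is not positive).
function effectiveRateUsd(plan: SubscriptionPlan, imagesPerMonth: number): number | null {
  if (imagesPerMonth <= 0) return null;
  if (plan.monthlyCap !== null && imagesPerMonth > plan.monthlyCap) return null;
  return plan.monthlyPriceUsd / imagesPerMonth;
}

const premiumPlus: SubscriptionPlan = {
  name: "Freepik Premium+ (monthly billing)",
  monthlyPriceUsd: 39, // price quoted in the post
  monthlyCap: null, // no cap assumed here; the post does not state one
};

const fakeUnlimited: SubscriptionPlan = {
  name: "example-unlimited", // made-up plan for illustration
  monthlyPriceUsd: 20,
  monthlyCap: 300,
};

const premiumRate = effectiveRateUsd(premiumPlus, 500);
const cappedRate = effectiveRateUsd(fakeUnlimited, 500);

console.log(premiumRate === null ? "not available" : premiumRate.toFixed(2));
console.log(cappedRate === null ? "does not hold at this volume" : cappedRate.toFixed(2));
```

Returning `null` instead of a misleadingly low rate is a deliberate choice in this sketch: a plan that throttles below your volume should drop out of the ranking rather than win it.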

## What Scopefull actually does

Scopefull answers one question for people buying creative AI tools: what is the cheapest way to do this specific thing right now.

The reason it needs to exist is that pricing pages for creative AI tools are genuinely one of the worst categories of the web in 2026. Hidden credits, fake unlimited tiers that throttle above a quantity nobody tells you, tokens that are not actually tokens, the same model priced three different ways across three resellers. I have been testing every one of them by hand and writing the math down per image, per resolution, per model, per month. That math is the actual product.

The landing page was the front door. I was stuck on the front door for weeks. It shipped once I stopped treating it as one problem and started treating it as three.

The models were fine the whole time. I was running them wrong.

P.S. Scopefull is still a work in progress.